ETL process steps

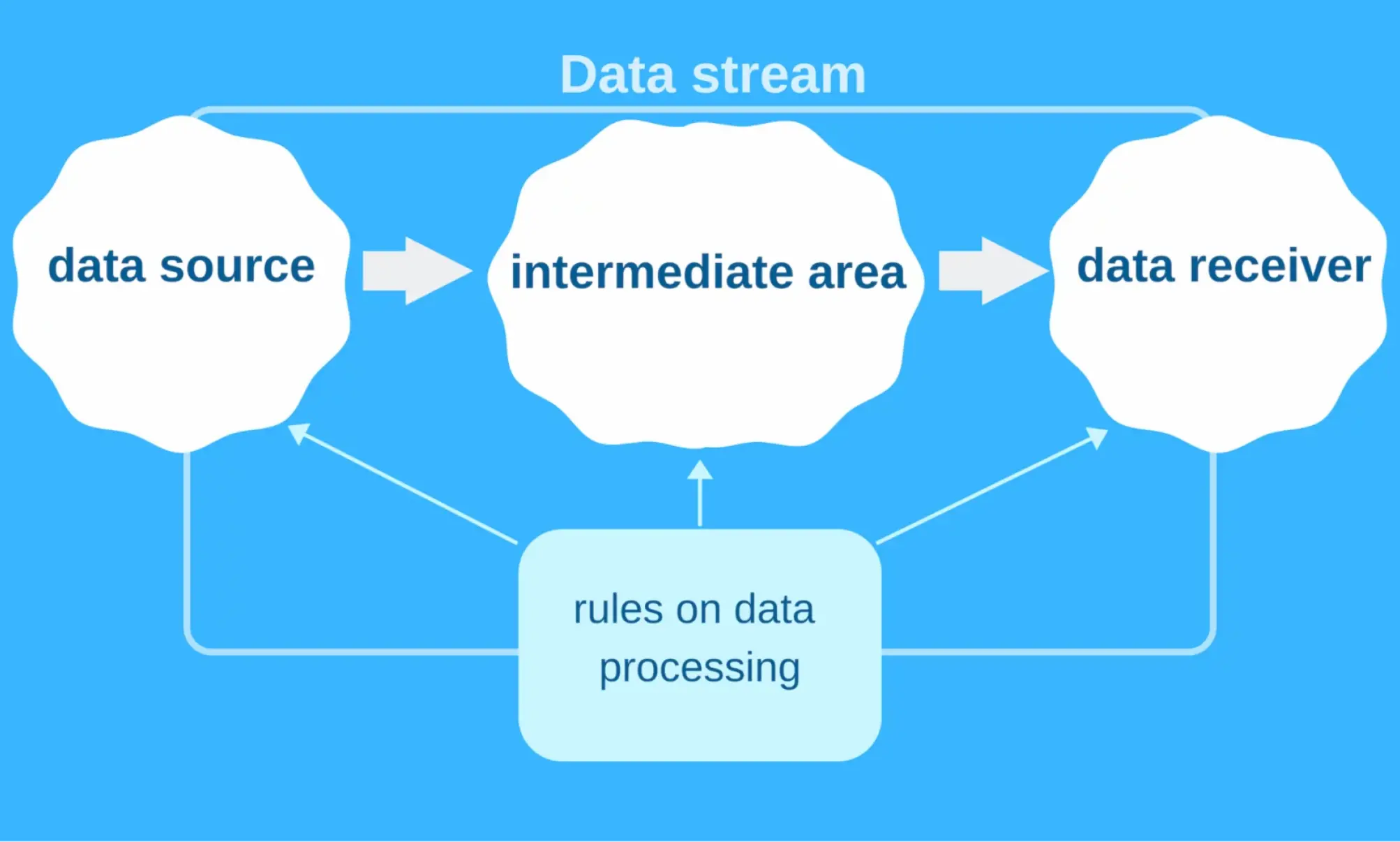

A web programmer can think of an ETL architecture as a 3-part system that includes:

- a data source

- an intermediate area

- a data receiver

A data stream is the movement of data from the source to the receiver. Each of the stages can be quite complicated, and ETL software development faces multiple challenges, including:

{{rr10-1="/custom-block-to-blog/two-page"}}

That's why an IT company needs to have a clear picture of the structures of the source and destination applications.

What is an ETL example?

The common ETL task is to transfer data from RDBMS to another database that works better for business intelligence tools. ELT jobs are divided into 2 types:

{{rr10-2="/custom-block-to-blog/two-page"}}

Taking data from RDBMS is an example of a <medium>stream job<medium>. The data points are transferred separately for further processing. <medium>A batch job<medium> can take the whole file, process it, and save it into another large file. Various ETL systems cope with these tasks in different ways.

The batch-only approach is getting old, as the growing number of streaming data sources encourage the use of ETL tools for stream jobs. They make the most recent data available quickly.

The variety of conventional and cloud-based data integration tools makes this choice difficult. I have created a list of five reliable ETL tools to help you make the right decision.

1. Apache Nifi

<medium>Pricing:<medium> free

<medium>Official website:<medium> https://nifi.apache.org/

<medium>Useful resources:<medium> documentation, tutorials

<medium>Pros:<medium>

- Perfect implementation of dataflow programming concept

- The opportunity to handle binary data

- Data provenance

<medium>Cons:<medium>

- Simplistic UI

- Lack of live monitoring and per-record statistics

The first on the list of the best ETL tools is an open-source Apache NiFi. Developed by Apache Software Foundation, it relies on Dataflow Programming. This ETL tool enables visual program assembly from boxes that can run almost without coding. You don't have to know any programming languages to use this tool.

One of the most popular open-source ETL tools can work with different sources, including RabbitMQ, JDBC query, Hadoop, MQTT, UDP socket, and more. You can filter, adjust, join, split, enhance, and verify data.

Apache NiFi is written in Java and distributed under the Apache 2.0 license. It runs on JVM and supports all JVM languages.

This ETL tool helps to create long-running jobs and suits for processing both streaming data and periodic batches. Manually managed jobs are also possible, though their set up can be challenging.

Apache NiFi is considered one of the best open-source ETL tools because of its well-rounded architecture. It's a powerful and easy-to-use solution. FlowFile includes meta-information, so the tool's capabilities aren't limited to CSV. You can work with photos, videos, audio files, or binary data.

The processors include 3 outputs:

{{rr10-3="/custom-block-to-blog/two-page"}}

If you want to drop terminated outputs, you can use special checkboxes. You should pay attention to the Process Groups. They are necessary for combining elements of a complex dataflow in advanced ETL programming.

Another great feature is the possibility of using various queue policies (FIFO, LIFO, and others). Data Provenance is a connected service that records almost everything in your dataflows. It's very convenient because you can see how the data was saved or processed. However, this function requires lots of disk space.

Some users complain about the Apache NiFi interface. It isn't impressive, but the usability is good enough. The UI has a simple, clean design without extra elements. The only downside is the lack of automatic adjustment of text fields for lengthy SQL queries. You have to adjust them manually.

There is also a built-in Node cluster. You can pick up several instances, and it will pull out the necessary ETL data. Apache NiFi includes back pressure necessary for a fast connection to MySQL, getting the file, and adding it to the next processor.

To sum up, Apache NiFi is an excellent alternative to other mainstream ETL tools. Its main advantage is the use of over 100 embedded processors for downloading files via HTTP, S3, Google Data Source, and uploading them to MySQL or other data receivers.

Configure the UI, press the RUN button, and if you do it right, everything will work.

{{rr10-4="/custom-block-to-blog/two-page"}}

2. StreamSets

<medium>Pricing:<medium> free

<medium>Official website:<medium> https://streamsets.com

<medium>Useful resources:<medium> documentation, tutorials, developer support forum, source code

<medium>Pros:<medium>

- Every processor comes with individual per-record statistics with visualization for effective debugging

- Attractive user interface

- Excellent ETL tool for streaming or record-based data

<medium>Cons:<medium>

- The absence of reusable JDBC configuration

- Changing the settings of one processor requires a pause in dataflow

StreamSets is the main rival of Apache NiFi. It's difficult to say which of these free ETL tools is better.

The data you input to Streamsets is automatically converted into exchangeable records. The standard format is designed for smooth streaming. Unlike Apache Nifi, this ETL tool doesn't show queues between processors. If you want to use different formats in Apache Nifi, you need to switch between processor versions. Streamsets does not require these manipulations. Instead of stopping only one processor, you need to stop the whole dataflow to change the settings.

It might seem that Streamsets make debugging more challenging, but it is the opposite due to the real-time debugging tool. The user-friendly interface with a live dashboard and statistics helps you notice and fix errors in time. Moreover, you can filter the connections between processors to check for suspicious records. There are four processor variations:

- <medium>The origin processor<medium> receives information from data sources.

- <medium>Processors<medium> that recieve and transform the data.

- <medium>Destinations<medium> record transformed data to external files.

- <medium>Executors<medium> process actions completed by other processors.

Streamsets processors can generate actions and events, including bugs. To track and fix them, you need executors. Some users prefer Apache NiFi, because its design is simpler, and everything you need is the Processors and Controller Services. However, Streamsets has a well-thought-out architecture that isn't difficult to get used to, and its UI looks better.

In my experience, the lack of Controller Services that are quite important for JDBC settings is crucial. Besides, adjusting all JDBC settings for each processor is annoying.

Streamsets checks all processors before you can run the dataflow. This feature seems quite useful, but in my experience, it's a controversial thing. Streamsets doesn't allow you to leave disconnected processors for fixing bugs in the future. All processors must be connected before the dataflow starts. I also missed the possibility of choosing two or more processors at a time. Moving many processors and reorganizing them one-by-one takes too much time and effort.

All in all, it is a mature, open-source ETL tool with a convenient visual dataflow and a modern web interface. I recommend you try both Streamsets and Apache NiFi to find out which of them is better suited to your goals.

3. Apache Airflow

<medium>Pricing:<medium> free

<medium>Official website:<medium> https://airflow.apache.org

<medium>Useful resources:<medium> tutorial

<medium>Pros:<medium>

- Suitable for different tasks

- User-friendly interface for clear visualization

- Scalable solution

<medium>Cons:<medium>

- Isn't suitable for streaming jobs

- Requires additional operators

The modern platform for designing, creating, and tracking workflows is an open-source ETL software. It can be used with cloud services, including GCP, Azure, and AWS. You can also run Airflow on Kubernetes using Astronomer Enterprise.

You can code on Python, but not engage in XML or drag-and-drop GUIs. The workflows are written in Python; however, the steps can be written in any language. Airflow was created as a perfectly flexible task scheduler. One of the top ETL tools is suitable for lots of different purposes. It is used to train ML models, send notifications, track systems and power functions within various APIs.

The main facts about the platform:

{{rr10-5="/custom-block-to-blog/two-page"}}

Apache Airflow was designed to fit four fundamental principles. The software developers aimed to create a <medium>dynamic, extensible, elegant, and scalable<medium> solution. It enables dynamic pipeline generation through Python coding. You can also define your own operators and executors, extend the library according to the needed level of abstraction. The pipelines are clear and accurate because parametrising is present within the platform's core. Thanks to the modular design with a message queue, Airflow can be easily scaled.

Apache Airflow is suitable for most everyday tasks (running ETL jobs and ML pipelines, delivering data, and completing DB backups). However, it's a poor choice for stream jobs.

The platform has a modern UI full of visualization elements. You can see all the running pipelines, track progress, and fix bugs. This helps complete complex tasks on DAGs.

The workflows are constant and stable. The structure is just a little more dynamic than that of an ETL database. If you define workflows as code, they will be more collaborative, versionable, testable, and maintainable.

The platform runs on a private Kubernetes cluster. It also includes resource management tools and analytics (StatsD, Prometheus, Grafana).

What about the ETL testing of Airflow workflows? You can use:

- unit tests

- integration tests

- end-to-end tests (in some cases)

The first type is suitable for checking DAG loading, Python Operator functions, custom Operators, and Bash/EMR scripts. The platform doesn't require any original configurations. The only thing that should be changed is the DB connection string. You need to create an empty database, and give the user permission to CREATE/ALTER, and an airflow command will handle the rest.

To conclude, Apache Airflow is a free, independent framework written on Python. It is a solid example of open-source ETL tools. It can be challenging to run Airflow alone, so you should use different operators.

4. AWS Data Pipeline

<medium>Pricing:<medium> variable

<medium>Official website:<medium> https://aws.amazon.com/datapipeline/

<medium>Useful resources:<medium> documentation, community forum

<medium>Pros:<medium>

- Easy to use ETL technology

- Reasonable price

- Excellent flexibility

<medium>Cons:<medium>

- Doesn't come with many built-in functions

The web service ensures processing and moving data between an AWS computer and various data sources. It provides permanent access to the stored data, as well as its transformation. The final results can be transferred to AWS services, such as Amazon DynamoDB, Amazon RDS, Amazon EMR, Amazon S3. This ETL tool simplifies the process of creating complex data processing workloads. It helps to achieve repeatable, highly available, and reliable case-load.

AWS Data Pipeline enables you to move and process data that was previously locked up in on-premises data silos. According to Amazon, this ETL tool possesses six considerable advantages:

{{rr10-6="/custom-block-to-blog/two-page"}}

AWS Data Pipeline is a reliable service that automatically retries the active processes in case of any failures. You will also receive notifications via Amazon SNS. They can be set for successful runs, delays, or failures.

The drag-and-drop console ensures fast and straightforward pipeline design. The built-in preconditions relieve you from writing any extra logic to use them. With Data Pipeline, you enjoy many popular features, such as scheduling, dependency tracking, and issues handling. The service's flexible design allows smooth processing of numerous files.

This product isn't expensive compared to other ETL tools. AWS Data Pipeline is a serverless orchestration service, and you pay only for what you use. Moreover, there is a free trial version for new users. It's a transparent solution that provides full information on the pipelines and offers complete control over the computational resources.

Finally, I highly recommend this ETL tool for performing pipe jobs. I use it to transfer data for a current project. Although AWS Data Pipeline doesn't have many built-in functions, it provides convenient UI. It can spawn instances and ensure cascading file management. I like this simple, inexpensive, and useful tool with built-in processors that allow you to do everything via UI.

5. AWS Glue

<medium>Pricing:<medium> variable

<medium>Official website:<medium> https://aws.amazon.com/glue/

<medium>Useful resources:<medium> tutorials

<medium>Pros:<medium>

- Supports various data sources

- Good integration with AWS services

<medium>Cons:<medium>

- Requires lots of manual setup

- Poor flexibility

The code-based, serverless ETL alternative to traditional drag-and-drop platforms is an effective but ambitious solution. AWS Glue allows creating and running an ETL job in the AWS Management Console. The service takes data and metadata from AWS, puts it in the catalog, and makes it searchable, queryable, and available for ETL.

The process includes 3 steps:

- classifying data through catalog building (JSON, CSV, Parquet, and other formats are available)

- generating ETL code and editing transformations (written on Scala or Python)

- scheduling and running ETL jobs

According to Amazon, this ETL tool comes with three major benefits.It is:

{{rr10-7="/custom-block-to-blog/two-page"}}

Apache Spark is used as a base of ETL logic. However, you may notice significant differences. The service has "dynamic frame" with specific Glue methods, while Spark uses "data frame".

AWS Glue is a substantial part of the AWS ecosystem. But you should be mindful of its intricacies. The service provides a level of abstraction in which you must identify tables. They represent your CSV files. There is a lot of manual work here, but in the end it will generate the code for Spark and launch it. You can download this code in Scala or Python and change it as you see fit. It's suitable for a wide range of data sources, but the service forces you to choose a specific solution while others may be unavailable.

How to select the best ETL tool?

According to InfoWorld, ETL generates the highest costs in building data warehousing systems. It's the bottleneck that requires the special attention of any web developer. An efficient ETL implementation is your chance to optimize costs and speed up the development process.

When choosing the best of the top ETL tools, consider these five criteria:

- the complexity of your system

- data requirements

- developer's experience

- costs of ETL technologies

- special business needs

I hope this review helps you choose the perfect ETL solution. You can get a free tech consultation on your project from our experts.

{{about-barmin-green="/material/static-element"}}